Happy New Year!

The always-insightful Yuval Levin has a terrific piece in the National Review (no paywall here) that represents the best-scaled mental framework about AI and regulation that I have yet read.

The NR piece expands on Levin’s WSJ op-ed, where he argues against a new AI regulatory agency. In that op-ed Yuval points out that generative AI is a general purpose technology like the internet. As such, expertise in the applications of such a technology already exists in many different agencies.

I totally agree. In fact, some of the proposals for a new AI regulator are simply repurposed from calls for a new internet regulator. (I’m looking at you, former FCC Chair Tom Wheeler.) I wrote “Does Big Tech Need its Own Regulator?” to examine four of those earlier proposals for a new digital agency, and Adam Thierer and I argued against a new AI regulator on the Federalist Society blog using the same logic as that paper.

Yuval’s points in the WSJ are very well made and in a similar vein.

But, even more impressive than writing something I already agree with, Levin’s National Review piece actually says important new things.

Levin is an astute observer of human nature. For example, he calls for more humility from the engineers engaged in “delirious catastrophism about AI.” He observes that engineers are usually less familiar with and therefore more terrified by non-deterministic, high-stakes situations than are politicians and lawyers, and this may color how engineers evaluate potential regulation of the highly stochastic generative AI algorithms. Levin says:

A technology that responds to the same prompt differently every time you pose it and takes analytical steps you didn’t expect is bound to terrify engineers. Overcoming that dread will require the technical experts to exercise some humility: They will need to grasp that public policy deals with terrifying uncertainties all the time, and that sometimes policy-makers might actually be better equipped than engineers to figure out what to worry about and how much.

I’ve repeatedly argued that the engineers who self-select into policy work often think law and society are as deterministic as computer code. As a result, policy wonks with deep technical backgrounds can be some of the best-intentioned advocates of truly terrible ideas. (Except for me, of course. I was inoculated at a young age by complexity theory.)

We need a “Middle Distance View” of AI. More substantively, Levin calls for a “middle distance” view of artificial intelligence that is “reasonably rooted in the character of the technology as it is taking shape.” To me, this means a view that focuses on the general, policy-relevant characteristics of the technology and industry. Levin argues that experts are too deep in the weeds while policy makers are throwing around generalities (most of which are borrowed from the social media debates, I would argue).

This middle distance view is desperately needed ESPECIALLY for state legislatures where legislation is actually passing. Here is a slide from a presentation I gave to the American Legislative Exchange Council:

I have been working to further flesh this out; Levin has lit a fire under me on this.

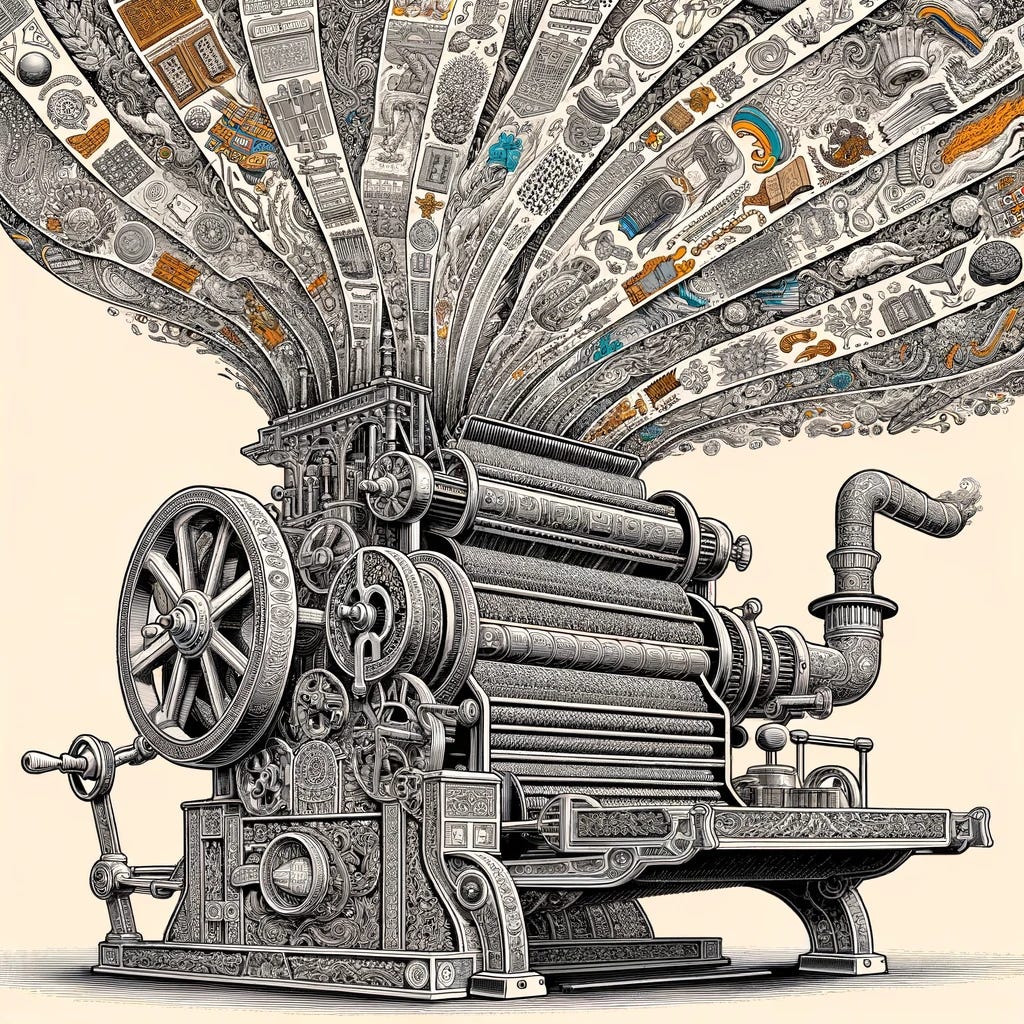

Generative AI is a Tradition Machine. But my favorite observation of the piece, and something that I think is genuinely new in the conversation, is Levin’s argument that generative AI is a tradition machine. Generative AI extends existing patterns. This has different implications for patterns found in the natural sciences than for patterns generated by humans. I’ll just quote this at length because it’s so clearly stated and important:

Such pattern extension may open truly new vistas in arenas where human beings are not fully cognizant of complex underlying patterns, particularly in some of the natural sciences. In fields like biochemistry, meteorology, and other arenas where researchers struggle to wrap their minds and tools around immense natural complexity, AI has the potential for truly radical breakthroughs.

But in the realm of man-made patterns — in the social sciences, arts, humanities, culture, and most of our everyday experience, such AI could be at least as much a force for continuity, conformity, and conventionality. It may be more of a tradition machine than a breakthrough engine. That doesn’t mean it won’t produce anything new. Traditionalism can be highly generative. It just means it will produce new things in the patterns of existing ones. (emphasis added)

Of course, many people have described generative AI models as pattern extenders, and I’ve referred to LLMs as delivering the “average of the internet” before. But Levin draws out deep societal and policy implications of this characteristic. Potential benefits include applying established knowledge and wisdom to new situations and freeing up human creativity to explore new expressions, just “as the emergence of photography led to more radical creativity in the visual arts.” Potential downsides include making society more of what it already is and driving “rigidity or conformity” by delivering the established wisdom in every situation.

Ultimately, Levin believes AI presents less an existential threat and more an existentialist challenge:

The internet has let our society more closely resemble our desires, which has been good and bad in the ways that our desires are. Artificial intelligence may similarly tend to let us become more like we already are and want to be. To worry well about that problem would mean worrying about our desires — which is a very daunting challenge but hardly a novel one.

I think Levin is right that generative AI presents a very human and very old challenge. How do we become who we really want to be within the pressures of society to conform?

I’ll be adding “Tradition Machine” to my list of policy-relevant AI characteristics. And I’ll be arguing that our individual, policy, and societal responses should adapt accordingly.

* *

Thank you for reading today. I’d love to hear from you: are engineers uniquely afraid of high-stakes nonlinear systems? What do you think of describing generative AI as a tradition machine? Who are you reading on human meaning and desires?