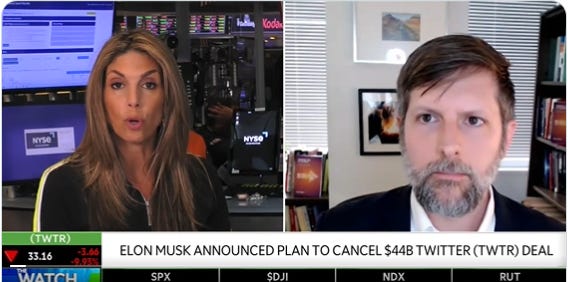

On Monday I spoke with TD Ameritrade’s Nicole Petallides about Elon Musk’s abandonment of his efforts to purchase Twitter, noting that this is terrible for Twitter and likely really expensive for Musk. But in the end, “Twitter needs Elon Musk more than he needs them.” Watch the whole thing here.

As I note in the interview, Twitter has two big challenges: 1) slow growth and low profits despite outsized usage and impact in elite media and political circles, and 2) a content-moderation challenge that has generated huge PR and political headwinds. Much of the enthusiasm (and trepidation) around Musk acquiring the company can be explained by the fact that private ownership - and in particular, ownership by Musk - promised that Twitter would take new and necessary risks to solve these problems. Musk promised to experiment with new revenue streams. But perhaps more importantly, he symbolized a “reset” on the political lobbying front and an opportunity for the company to establish principled stances and improve procedures for dealing with user content.

In the rest of this post I’ll describe the importance of solving this latter problem and offer some ideas (drawn from my book) for how Twitter - with or without Musk - could approach this problem of content moderation.

The Content Moderation Problem

Content moderation on social media is the most contentious issue in technology policy today. Americans generally agree that illegal content should not be permitted on Twitter. But when it comes to political topics, the divide is stark. Many on the right fear that these companies are biased against right-of-center users. On the left, many believe that these platforms facilitate hate and normalize extremism. Each side seeks to “work the refs” to get their opponents’ content taken down. As a result, the platforms face conflicting social and political pressure to set and enforce policies.

Both left and right fear that these online platforms hold outsized power over public discourse because so much speech flows through them. Each day, users post billions of pieces of content to popular internet platforms such as Facebook, Twitter, YouTube, and Reddit. The sheer volume of user-contributed content is astounding: On Twitter -- one of the smallest of the major platforms -- users post more than six thousand tweets per second.

As one might expect when dealing with so much content, there are mistakes. Even if content moderators get 99.99 percent of moderation decisions correct, that’s something like six mistakes every ten seconds for Twitter.
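As a back-of-the-envelope check on that figure, here is a minimal sketch of the arithmetic. It assumes, for simplicity, exactly one moderation decision per tweet; the constant and function names are illustrative and not drawn from any Twitter data or API.

```python
# Rough check of the error-volume estimate above.
# Assumption (not from Twitter): one moderation decision per tweet.
TWEETS_PER_SECOND = 6_000  # volume cited in the post
ERROR_RATE = 0.0001        # 99.99 percent of decisions correct


def expected_mistakes(seconds: float,
                      tweets_per_second: float = TWEETS_PER_SECOND,
                      error_rate: float = ERROR_RATE) -> float:
    """Expected number of wrong moderation decisions in a time window."""
    return seconds * tweets_per_second * error_rate


per_ten_seconds = expected_mistakes(10)
per_day = expected_mistakes(24 * 60 * 60)

print(f"Mistakes per ten seconds: {per_ten_seconds:.1f}")
print(f"Mistakes per day: {per_day:,.0f}")
```

Under these assumptions the result is about six mistakes every ten seconds, which adds up to roughly 52,000 mistakes per day.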

But the left and right share a big blind spot when it comes to the content moderation debate: both expect centralized solutions to address what is fundamentally a bottom-up, emergent phenomenon.

Musk has demonstrated an instinctual desire for open discussion and minimal centralized interference. But, as someone who has also used Twitter’s block button liberally, he surely is aware that individual users and communities make varied choices when it comes to engaging with others. Platforms that obligate users to engage with a firehose of all internet content aren’t particularly sought after by users.

How might Musk have enabled these choices while facilitating an open platform? No one person – not even a genius billionaire – can solve this dilemma. There is no centralized solution to the emergent problem of content moderation.

But, as I describe in my book, there are four complementary tactics that leaders can use to govern complex systems with a minimum of :

Minimize Simplistic Legibility

Temper Ambitious Plans with Prudence and Humility

Reduce the Planner’s Ability to Impose a Plan

Increase the Ability of Participants to Resist Plans or to Shape Them

These tactics are useful in any complex system. How might they apply to social media content moderation? In particular, how could they help Twitter leadership facilitate emergent solutions to this emergent problem? I have some ideas.

Minimize Simplistic Legibility

Authorities seeking to govern a complex system often gather information about the system to make it legible to them. They use such information to form a model that is simpler than the system. And when they use that simplified model to generate rules for the system, they necessarily ignore important information.

For example, social media platforms are full of important, context-specific, illegible information in the millions of communities that have formed on these platforms. The social norms in “The Dad Gaming” Facebook group likely differ significantly from those in the “Retired or thinking retirement?” group, and both are likely quite different from those in the “Environmental Professionals” group. The people in these communities find very different things interesting, off-topic, insulting, or offensive. If Facebook tried to set detailed rules about proper behavior that applied to all groups, such rules could not capture much of the context specific to any single group.

Instead, Facebook and several other social media platforms allow varying degrees of moderation to occur within the community itself. Some platforms provide moderator tools for admitting and removing members. Other “moderation” is simple feedback from fellow members reinforcing or discouraging unaligned behavior, either directly to the individual users or to group administrators.

All major platforms do have baseline standards for user-contributed content, however. It is typically the platform acting on these policies that raises the policy issues mentioned above. Drawing this baseline is challenging, as interest groups lobby to shape these policies. People might lobby Facebook to ban a flat earth group rather than simply not join such a group. Or they might lobby the government to impose such policies on the platforms. The further from the community the source of the rules is, and the more generally applicable such rules are intended to be, the more context the rules will ignore, and the more simplistic the legibility imposed.

Among social media platforms, Twitter is one of the flattest in structure, with only thin options for creating customized groups that include some and exclude others. This means Twitter hasn’t enabled the emergence of smaller communities of users who seek a common goal and who would share and develop common standards for behavior within that community. Instead, Twitter is one large community with rules that are policed at the network-wide level (such as bans, flags, or takedowns) and norms that are only enforced at the individual level (using blocks and mutes).

Twitter could take advantage of more local, community-level knowledge by adding hierarchies of moderation, or by enabling third parties to do the same. The most obvious area - and one that is woefully neglected but extremely powerful - is Twitter’s direct messaging product. Adding better group tools there would foster the development of smaller communities where self-policing can happen.

Temper Ambitious Plans with Prudence and Humility

Critics of how platforms are currently doing content moderation often call for the companies to invest more money, hire more staff, and develop and deploy more sophisticated screening algorithms to deal with content problems. They call for industry-wide consortiums and coordination to ensure problematic content comes down quickly. They seek comprehensive processes for users to appeal moderation decisions. Most of all, they ask for detailed, clear content moderation policies that will provide definitive answers for every situation. Many of these proposals would require sophisticated and ambitious upfront planning. Some of them have been attempted.

Today, the large social media companies have very complex content moderation procedures and policies. But none of these were the result of one-time design and implementation.

Instead, the procedures and the policies have evolved over time, frequently through changes motivated by embarrassing mistakes. The internal policies are frequently updated as new kinds of problematic content are flagged by governments, press, third-party civil society groups, and users. Facebook has even created an institutional process for appeals (see below for more detail) that will help build a body of precedential decisions to guide future moderation decisions, much like common law in a legal setting.

Designing and implementing a comprehensive content moderation plan in one fell swoop is doomed to fail. Incremental, piecemeal experiments cobbled together over time are likely to be more robust and well-tested. For example, in dealing with misinformation, rather than adopting a comprehensive initial plan that attempts to cover all categories of potential misinformation, platforms could identify one narrow category of misinformation to focus on. They could test several different techniques for identifying this type of content and how to deal with it (removal, flagging, account banning).

This would allow platforms to learn from user feedback and understand how misinformation producers react to new policies, and then apply those lessons to broader categories of misinformation.

When platforms talk about their abilities to police content, they should speak with humility. Noting that content moderation is a process that is constantly evolving, that mistakes are essential to learning, and that no one should look solely to the company to police everything – this humility would go a long way in setting expectations. Unfortunately, many platforms spend more time touting their huge investments and implying that this time, they’ve solved the problem.

They haven’t, and they shouldn’t claim to have. Musk found this out rather quickly: he started with bold claims about how he would simply fix content moderation on Twitter but, when pushed, offered (often unclear) caveats. Twitter, with or without Musk, should not over-promise about what it can deliver on content moderation.

Reduce the Planner’s Ability to Impose a Plan

Platforms already face some constraints on their ability to impose a plan on their users. Some of these constraints include commercial pressures from consumers, dictates of local law, and investor interests.

One way to further limit a platform’s ability to impose a central plan would be to strengthen any of these constraints. For example, local laws could be changed to limit platforms’ abilities. Of course, such laws themselves have all the potential flaws of centralized decision-making and should be evaluated accordingly. Additionally, in the United States, the First Amendment limits the extent to which the government can compel or forbid the posting of certain kinds of content.

Platforms could themselves limit their own ability to impose certain plans. They could make legally binding promises about how they will use or change the platform. They also could push decision-making out to independent organizations or standards bodies. Facebook has taken an incremental step in this direction with the creation of the Facebook Oversight Board, which is authorized to review specific user appeals of Facebook content moderation actions, such as removal of content or failure to remove content.

Mike Masnick has proposed that companies limit their ability to impose plans by adopting “Protocols, not Platforms.” Protocols are the standardized online recipes for how different computers talk to each other. For example, SMTP allows a wide variety of different programs (such as Gmail and Outlook) to exchange email with one another. Masnick describes how, over the past twenty years, companies have moved away from using open protocols and toward centralized platforms where the company controls the whole interaction. This increased control has benefits, such as the ability to improve the service more rapidly and the ability to monetize. But these benefits come with “demands for responsibility, including ever greater policing of the content hosted on these platforms,” as Masnick notes. By shifting back toward open protocols, the companies would decentralize content moderation decisions and reduce their ability to interfere.

Yet another technological approach would make certain types of control technically impossible. For example, in 2020 Mark Zuckerberg announced that Facebook would begin to emphasize encrypted private chatroom functionality. Facebook would not be able to see encrypted user content, let alone moderate it. Such a self-imposed reduction of legibility would limit Facebook’s ability to review or moderate certain content. Given Twitter’s extremely limited group functions, end-to-end encryption of DMs wouldn’t add a lot, but if Twitter does lean in to DM development (see above), encryption should be on the menu.

Increase the Ability of Participants to Resist Plans or to Shape Them

Users of social media platforms already have a greater ability to resist platforms’ content moderation plans than subjects of a totalitarian government. They can forgo using such platforms, although it might be inconvenient. Many groups have also been able to effectively organize protests against certain social media platform choices using the very social media platforms themselves.

Platforms could, however, enhance the ability of users to resist undesirable plans. Twitter could create tools that empower various users or groups of users to help govern themselves and their communities. Such tools could include coding consumer review and ratings systems, deputizing users, creating more broadly accessible moderator tools, and incentivizing beneficial user behavior. Platforms like Wikipedia, Reddit, and Discord extensively use such tools and benefit from the social norms that have developed around them. These tools enable users to incorporate their knowledge and mētis into the platform and evolve as their group changes.

Masnick’s protocol approach would also empower users by providing them with the ability to pick and choose from a wide range of moderation tools.
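To make “pick and choose” concrete, here is a minimal sketch of what user-selected moderation tools could look like. It is purely illustrative: the `Post` type, the filter functions, and the sample feed are hypothetical names invented for this example, not part of Masnick’s proposal or of any real platform’s API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Post:
    """Hypothetical post record, for illustration only."""
    author: str
    text: str


# A moderation filter is any function that decides whether to show a post.
ModerationFilter = Callable[[Post], bool]


def block_authors(blocked: Iterable[str]) -> ModerationFilter:
    """Build a filter that hides posts from the given authors."""
    blocked_set = set(blocked)
    return lambda post: post.author not in blocked_set


def mute_keywords(keywords: Iterable[str]) -> ModerationFilter:
    """Build a filter that hides posts containing any given keyword."""
    lowered = [k.lower() for k in keywords]
    return lambda post: not any(k in post.text.lower() for k in lowered)


def apply_filters(posts: Iterable[Post],
                  filters: Iterable[ModerationFilter]) -> List[Post]:
    """Keep only the posts that every user-chosen filter allows."""
    chosen = list(filters)
    return [p for p in posts if all(f(p) for f in chosen)]


feed = [
    Post("alice", "New paper on emergent order"),
    Post("bob", "Buy cheap followers now"),
    Post("carol", "Flat earth meetup tonight"),
]

# Each user (or community) assembles their own list of filters.
my_filters = [block_authors(["bob"]), mute_keywords(["flat earth"])]
visible = apply_filters(feed, my_filters)
print([p.author for p in visible])
```

The point of the design is that the filter list belongs to the user or community rather than to the platform: different users can run the same feed through different filters, including filters written by third parties.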

Social media companies like Twitter also could further embrace this tactic by making it easy to port their data and connections to another platform (“data portability”) or to access their information on one platform from another (“interoperability”). If users can more easily leave a platform for a competitor, they can more easily resist centralized plans they dislike.

For the same reasons content moderation decisions should be driven downward, we should prefer that data portability and interoperability efforts be driven by parties as close to the applications as possible. That means government mandates are less likely to strike the right benefit/cost tradeoff.

HOWEVER, government could and should absolutely improve the environment here by removing the draconian penalties (criminal in some cases) that laws like the Computer Fraud and Abuse Act and the Digital Millennium Copyright Act impose on those who would create “adversarial interoperability,” meaning interoperability created without permission of the platform. (I want to work more on this issue and am looking for partners - please reach out.)

--

In sum, Twitter and other social media platforms are deeply involved in complicated schemes to control the content on their platforms. They face political pressure to act and practical challenges of dealing with so much content. It seems Twitter won’t be able to take advantage of private ownership or Musk’s willingness to shake things up. But if Twitter’s leaders want to improve its processes, the tactics above could help.

This post is drawn from Chapter 8 of my book, “Getting Out of Control: Emergent Leadership in a Complex World.”